Engineering the Margin.

The first protocol that verifies that AI-generated code produces identical results across models, languages, and runtimes.

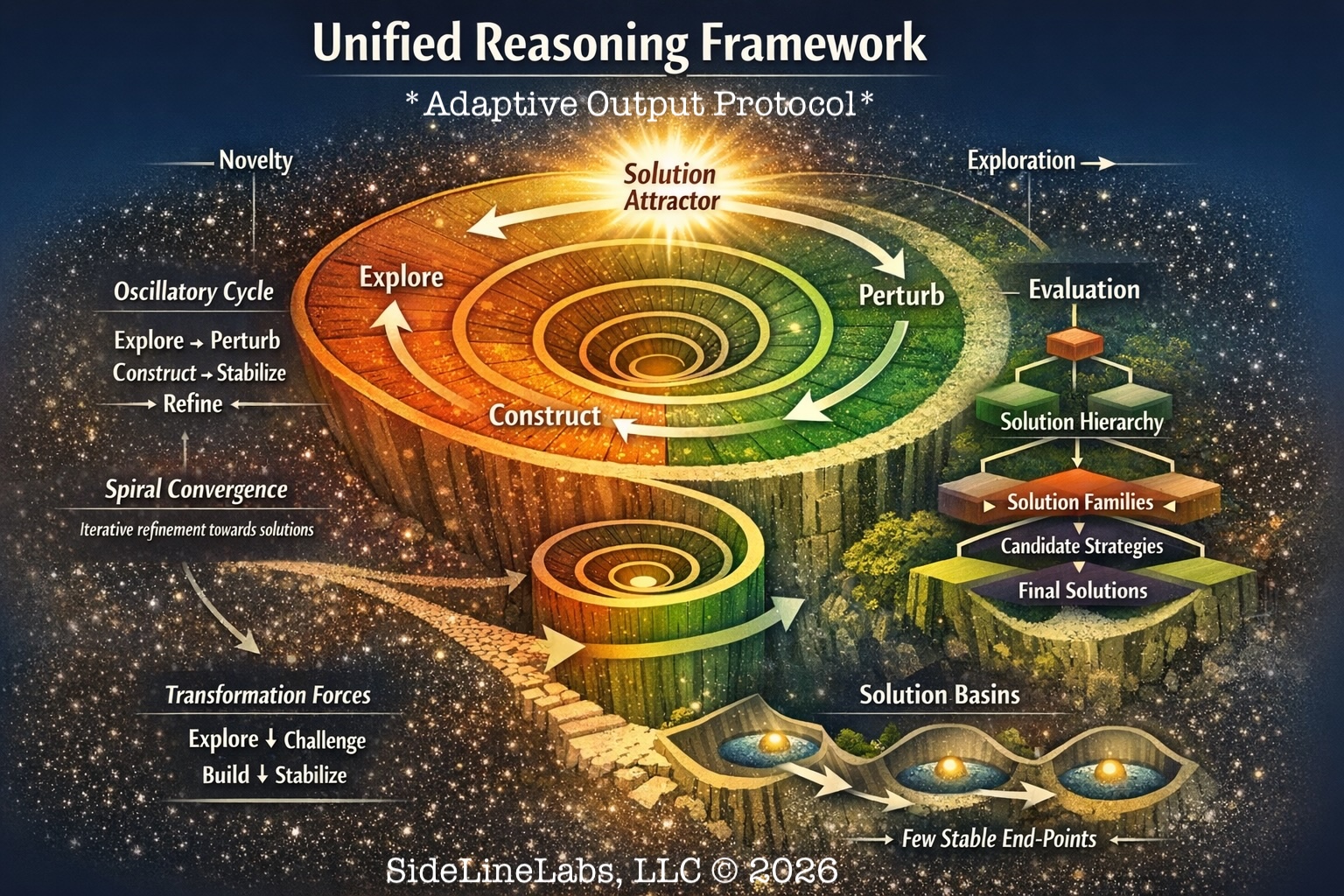

AOP is a behavioral protocol that gives any LLM a consistent mode system — switch between EXPLORE, BUILD, HARDEN, CHAOS, and 12 more on command. Same interface. Predictable output. Every major model, validated.

Prefix any prompt with a mode tag. The model switches behavior on command — exploratory, structured, adversarial, generative. No fine-tuning required.
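As a rough illustration of prefixing a prompt with a mode tag, the sketch below builds a tagged prompt string. The bracketed `[MODE]` syntax, the `Mode` enum, and the `with_mode` helper are assumptions made for this example. They are not taken from the AOP spec, and only four of the named modes are listed.

```python
from enum import Enum


class Mode(Enum):
    """A few of the mode names mentioned above (hypothetical enum, not the AOP spec)."""
    EXPLORE = "EXPLORE"
    BUILD = "BUILD"
    HARDEN = "HARDEN"
    CHAOS = "CHAOS"


def with_mode(mode: Mode, prompt: str) -> str:
    """Return the prompt prefixed with a mode tag.

    The "[MODE] " bracket format is an assumed syntax for illustration only.
    """
    return f"[{mode.value}] {prompt.strip()}"


if __name__ == "__main__":
    # The resulting string would be sent to whichever model you use.
    print(with_mode(Mode.HARDEN, "Review this function for edge cases."))
```

Because the tag is plain text at the start of the prompt, the same string can be sent to any provider without wrapper code.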

AOP runs identically on Claude, Gemini, GPT, and local models. Switch providers without rewriting your prompts. Your protocol investment is permanent.

The VOID Parity Gate fuzzes 10K+ inputs to prove behavioral equivalence across languages and runtimes. AOP isn’t just a pattern — it’s a provable system.
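The general idea behind a parity gate can be sketched as differential fuzzing: feed the same randomly generated inputs to two implementations and record any disagreement. The example below is a minimal sketch under stated assumptions, not the VOID Parity Gate itself. The `clamp` functions, the input ranges, and the `parity_check` helper are all hypothetical, and both implementations are in Python for brevity, where a real gate would compare across languages and runtimes.

```python
import random
from typing import Callable, List, Tuple

Args = Tuple[int, int, int]


def reference_clamp(x: int, lo: int, hi: int) -> int:
    """Reference implementation (hypothetical example function)."""
    return max(lo, min(x, hi))


def candidate_clamp(x: int, lo: int, hi: int) -> int:
    """Candidate implementation, e.g. one generated by a different model."""
    if x < lo:
        return lo
    if x > hi:
        return hi
    return x


def parity_check(
    ref: Callable[[int, int, int], int],
    cand: Callable[[int, int, int], int],
    trials: int = 10_000,
    seed: int = 0,
) -> List[Args]:
    """Run both functions on the same seeded random inputs and collect mismatches."""
    rng = random.Random(seed)  # fixed seed keeps the run reproducible
    mismatches: List[Args] = []
    for _ in range(trials):
        lo = rng.randint(-1000, 1000)
        hi = rng.randint(lo, 1000)  # guarantees lo <= hi
        x = rng.randint(-2000, 2000)
        args = (x, lo, hi)
        if ref(*args) != cand(*args):
            mismatches.append(args)
    return mismatches


if __name__ == "__main__":
    failures = parity_check(reference_clamp, candidate_clamp)
    print(f"{len(failures)} mismatches in 10,000 trials")
```

An empty mismatch list is evidence of equivalence over the sampled inputs. The fixed seed lets any failing input be replayed exactly.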

Interactive visualization of the CHAOS/EVOLVE mode discovery run. 130 generations, 78 mode survivors, 60% survival rate.

The complete developer guide — spec, examples, and tooling for embedding AOP into any model or agent pipeline.

AOP and every tool we ship follow four rules. If a solution doesn’t measurably expand the user’s margin in time, accuracy, or autonomy, it doesn’t leave the labs.

Prefix a prompt, get a mode. No fine-tuning, no API wrappers, no infrastructure. The protocol works the moment you paste it.

Switch from Claude to Gemini to a local Ollama model. Your prompts, modes, and workflows transfer unchanged.

Small daily improvements in prompt structure compound into measurably better output. Each mode sharpens the next.

The VOID Parity Gate fuzzes 10K+ inputs across languages and runtimes. Every behavioral claim is backed by empirical data.

We don’t add AI features because they’re trendy. We add them only when they solve a real, repeatable problem.

When AI is involved, users know when it’s being used, what it’s doing, and why. No black-box behavior.

Our tools make users more capable over time, not dependent. Automation follows understanding.

We don’t automate what isn’t understood. Automation comes after clarity, not before it.

Our software is built for environments where accuracy matters. AI supports safe, compliant work — it does not bypass process or professional standards.

Technology changes quickly. Responsibility does not. We prioritize durable, understandable systems over short-term trends.

SideLineLabs uses AI to support people — not replace them — and builds technology with long-term responsibility in mind.

Empirical findings from building AOP — documenting novel behaviors, failure modes, and verification methods in large language model systems.

SideLineLabs is in active development on AOP. If you work in enterprise AI, AI safety research, or regulated industries, we want to hear from you.